847 Alarms at 4 AM

At 4:00 AM on March 28, 1979, a pressure relief valve stuck open at Three Mile Island Unit 2. What followed was the most studied nuclear accident in American history, and it had almost nothing to do with the valve.

The reactor's safety systems did exactly what they were designed to do. The SCRAM triggered. Emergency coolant activated. Alarms sounded.

All of them. At once.

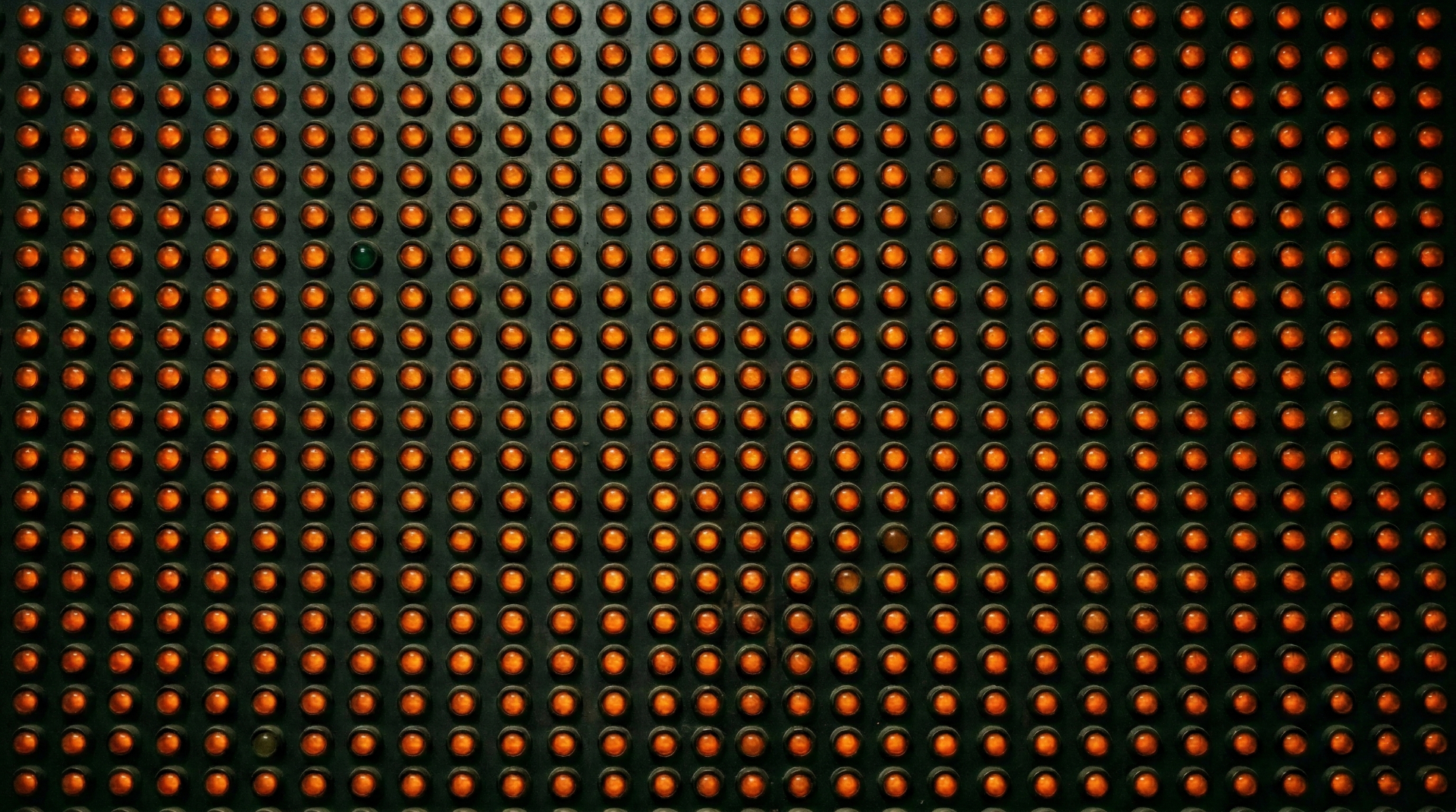

More than 100 alarms fired in the first few minutes. The control room had no alarm prioritization and no way to suppress low-relevance alerts. Every indicator screamed with equal urgency: the critical and the routine, the cause and the symptom, the thing that mattered and the hundred things that didn't.

The operators stood in front of a wall of flashing lights and had to answer one question: what do we fix first?

They got it wrong. Instruments showed high water levels in the pressurizer, and the operators, unable to distinguish cause from symptom in the flood of alarms, concluded the reactor had too much coolant. They turned off the emergency cooling system. The reactor was actually losing coolant through the stuck valve. They had shut off the one thing keeping the core alive. Within hours, it partially melted.

The defense system worked. The defense system's output caused the meltdown.

847 alarms at 4 AM

Replace the control room with a Slack channel. Replace the flashing lights with a vulnerability report. Replace the 100 simultaneous alarms with 847 CVEs.

A security scanner runs against 12 production clusters. It finds 847 vulnerabilities. It scores each one with CVSS. It produces a report. It sends the report to the platform team.

The platform team has three engineers.

The report tells them everything and nothing. It lists every CVE but not which ones are reachable from the internet, not what breaks if a given service is compromised. It does not tell them what to fix first.

So the engineers do what the TMI-2 operators did. They stand in front of the wall of flashing lights and start guessing. Manual correlation. Spreadsheets. Tribal knowledge about which clusters matter more.

This is a methodology problem. Not staffing, not tooling. The distinction matters, because better tools built on a broken methodology will reproduce the same failure at higher resolution.

The valve indicator problem

The pressure relief valve was stuck open. Coolant was draining from the reactor. But the indicator on the control panel didn't show whether the valve was open or closed. It showed whether the valve had been commanded to close.

The command had been sent. The indicator showed "closed." The valve was open. The operators trusted the indicator.

CVSS scores have the same problem. A CVSS base score tells you how severe a vulnerability is in theory, absent any context about your environment. Supplementing it with EPSS or KEV data adds evidence about exploitation in the wild, but still nothing about your own network. It tells you the command was sent. It does not tell you the state of the valve.

A CVE with a CVSS score of 9.8 on an air-gapped internal build server with no inbound network paths is not a 9.8 in your environment. A CVE with a score of 5.3 on a public-facing service that chains with two other medium-severity issues to reach your database? That might be your actual 9.8.

A CVSS base score measures theoretical severity. It says nothing about whether an attacker can reach the service, whether this CVE chains with others into a viable attack path, or what happens downstream if the service is compromised.

Calculating risk from CVSS alone is like calculating insurance premiums from the probability of a hurricane without checking whether the house is in Kansas or on the Florida coast.

The TMI-2 operators didn't lack data. They were drowning in it. What they lacked was a model that connected the data to reality.

The real failure mode: risk displacement

Most organizations handle vulnerability management the same way TMI-2 handled its alarms.

The reactor's alarm system was designed by one team. The control room was operated by another. The alarm designers built a comprehensive system: every possible anomaly would trigger a notification. Complete coverage. Nothing missed.

They were right. Nothing was missed.

The problem was that "nothing missed" and "useful to the operator" are not the same thing. The alarm system's completeness became the operator's paralysis. The designers had optimized for their metric (coverage) and displaced the actual hard problem (prioritization) to someone else.

This is exactly what happens when a security team runs a scanner, generates a report of 847 CVEs, and sends it to the platform team. The security team's job, by their own metrics, is done. Complete coverage. Nothing missed.

The platform team now owns the triage. They have the list but not the context, not the topology, not the blast radius analysis. They have a wall of flashing lights and a reactor that needs attention now.

Call it what it is: risk displacement. The burden of analysis moves from the team that understands threats to the team that doesn't have the tools or the mandate to prioritize them.

The TMI-2 alarm system didn't protect the operators. It made their job harder. The scanner report doesn't protect the platform team. It creates work and calls it security.

What the nuclear industry learned

After TMI-2, the nuclear industry redesigned the entire alarm methodology.

The reforms introduced alarm prioritization: suppress low-relevance notifications during high-stress events. They added contextual displays that show operators the state of the system rather than a list of deviations from normal. And they formalized alarm rationalization, determining which alarms matter under which conditions, and what the operator should actually do about it.

More alarms do not mean more safety. An alarm system that fires 100 alerts when 3 are critical is worse than one that fires 3. The operator's attention is finite, and every irrelevant alarm steals cognitive resources from the ones that matter.

The nuclear industry learned that the alarm system's job is not to tell the operator everything that's wrong. It's to tell the operator what to do next.

Vulnerability management hasn't learned this yet.

From lists to topology

The cybersecurity industry's response has been to build better alarms. The scanner now produces a sorted list instead of an unsorted one. It adds a risk score. Maybe it cross-references with the CISA KEV catalog or flags "actively exploited in the wild."

These are improvements, not solutions. You can't meaningfully sort 847 CVEs without understanding the topology they exist in. Sorting requires knowing which services are reachable, what they connect to, and what breaks if they're compromised. That knowledge doesn't live in a scanner. It lives in the relationships between assets.

A sorted list of CVEs is still a list. You can't ask a list "what's the shortest path from the internet to my database through these vulnerabilities?"

That's a graph question. Your assets, services, and identities are nodes. The connections between them (network paths, trust relationships, data flows) are edges. Vulnerabilities attach to nodes, but exploitability is a function of the path, not the node.
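To make the graph question concrete, here is a minimal Python sketch. Everything in it is hypothetical: the service names, the placeholder finding IDs and scores, and the simplifying assumption that an attacker can only step onto a node that carries at least one known vulnerability. It illustrates the idea; it is not a real attack-path engine.

```python
from collections import deque

# Hypothetical topology: node -> nodes it can reach directly.
EDGES = {
    "internet": ["web-frontend"],
    "web-frontend": ["orders-api", "legacy-reports"],
    "orders-api": ["customer-db"],
    "legacy-reports": ["customer-db"],
    "build-server": [],  # nothing leads here: no inbound network paths
    "customer-db": [],
}

# Hypothetical scanner findings attached to nodes: (placeholder ID, CVSS score).
VULNS = {
    "web-frontend": [("FINDING-A", 5.3)],
    "legacy-reports": [("FINDING-B", 5.9)],
    "customer-db": [("FINDING-C", 6.1)],
    "build-server": [("FINDING-D", 9.8)],
}


def shortest_attack_path(edges, vulns, source, target):
    """Breadth-first search that only steps onto nodes with a known vulnerability.

    Returns the shortest path as a list of node names, or None if no path exists.
    """
    queue = deque([[source]])
    seen = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for nxt in edges.get(node, []):
            # Assumption: a node without findings cannot be used as a stepping stone.
            if nxt not in seen and vulns.get(nxt):
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


print(shortest_attack_path(EDGES, VULNS, "internet", "customer-db"))
print(shortest_attack_path(EDGES, VULNS, "internet", "build-server"))
```

In this toy topology the 9.8 on `build-server` is unreachable from the internet, while three medium-severity findings form a complete route to the database. A list sorted by score would put them in the opposite order.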

The 3-person platform team managing 12 clusters doesn't need a better list. They need answers to questions a list can't answer:

- Which of these 847 CVEs sit on services reachable from the internet?

- Which of those services connect to data stores with customer data?

- If this service is compromised, what's the shortest path to a critical asset?

- Which three patches would eliminate the most attack paths?

In a graph, blast radius is a query, not a guess. You traverse outward from the compromised node and measure what's reachable. Prioritization is a calculation over topology: which vulnerabilities sit on the most paths to the things that matter?
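Both ideas can be sketched in a few lines of Python. The topology, the set of vulnerable nodes, and the helper names below are all hypothetical. The sketch also assumes that a compromised service can reach any data store it connects to without needing a further vulnerability there. Enumerating every path works for a toy graph; a real environment would need something more scalable.

```python
from collections import deque

# Hypothetical topology: node -> nodes it can reach directly.
EDGES = {
    "internet": ["web-frontend", "vpn-gateway"],
    "web-frontend": ["orders-api", "legacy-reports"],
    "vpn-gateway": ["legacy-reports"],
    "orders-api": ["customer-db"],
    "legacy-reports": ["customer-db"],
    "build-server": [],
    "customer-db": [],
}
# Hypothetical: nodes with at least one scanner finding.
VULNERABLE = {"web-frontend", "vpn-gateway", "orders-api", "legacy-reports", "build-server"}
CRITICAL = {"customer-db"}


def blast_radius(edges, start):
    """Everything reachable from a compromised node, excluding the node itself."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen - {start}


def attack_paths(edges, vulnerable, source, targets):
    """Enumerate simple paths from source to any target.

    Intermediate hops must be vulnerable; targets are reachable from any
    compromised neighbor (assumption stated in the lead-in).
    """
    paths = []

    def walk(node, path):
        if node in targets:
            paths.append(path)
            return
        for nxt in edges.get(node, []):
            if nxt not in path and (nxt in vulnerable or nxt in targets):
                walk(nxt, path + [nxt])

    walk(source, [source])
    return paths


def rank_patches(edges, vulnerable, source, targets):
    """Count how many attack paths disappear if each vulnerable node is patched."""
    baseline = len(attack_paths(edges, vulnerable, source, targets))
    gains = {}
    for node in vulnerable:
        remaining = len(attack_paths(edges, vulnerable - {node}, source, targets))
        gains[node] = baseline - remaining
    # Most paths eliminated first; ties broken alphabetically for stable output.
    return sorted(gains.items(), key=lambda kv: (-kv[1], kv[0]))


print(blast_radius(EDGES, "web-frontend"))
print(rank_patches(EDGES, VULNERABLE, "internet", CRITICAL))
```

Here patching `build-server` eliminates zero attack paths no matter what its score is, while `legacy-reports` and `web-frontend` each sit on two of the three routes to the database.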

The scanner flags a critical CVE on a high-profile production service. The team scrambles to patch it. Meanwhile, a chain of three medium-severity CVEs on a forgotten internal service provides a clear path to the same database. Nobody sees the chain because nobody's modeling the relationships.

The TMI-2 valve wasn't dangerous because it was stuck open. It was dangerous because it was stuck open on the path between the reactor core and the environment. Location in the topology defined the severity, not the defect itself.

The tool is the process

The nuclear industry's post-TMI redesign went further. They embedded the methodology in the control room itself. Alarm rationalization wasn't a document operators consulted alongside their instruments. It became how the instruments worked. The tool was the methodology.

This is where the "just buy better tooling" argument gets it half right. The right tool does embed methodology: reachability analysis, asset relationship mapping, and exposure context should be built into how your team works, not bolted on as a separate triage step.

But a tool can only implement a methodology that exists. Reachability and exposure data don't tell you whether a compromised internal API matters more than an exposed storage bucket. That ranking comes from understanding business impact, and business impact is an organizational decision. Someone has to decide what the crown jewels are. The graph can model the paths, but the weight you assign to each destination is a business call.
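A small Python sketch shows why the weights matter. The topology, the asset names, the two example organizations, and the impact numbers are all hypothetical. The point is only that the same graph yields a different ranking once the business assigns different weights to the destinations.

```python
from collections import deque

# Hypothetical topology: node -> nodes it can reach directly.
EDGES = {
    "internal-api": ["billing-db"],
    "storage-bucket": ["launch-assets"],
    "billing-db": [],
    "launch-assets": [],
}


def reachable(edges, start):
    """All nodes reachable from start, including start itself."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def weighted_exposure(edges, impact, node):
    """Sum the business-assigned impact of every destination reachable from node."""
    return sum(impact.get(dest, 0) for dest in reachable(edges, node))


def rank(edges, impact, candidates):
    """Order candidate nodes by weighted exposure, highest first."""
    return sorted(candidates, key=lambda n: (-weighted_exposure(edges, impact, n), n))


# Two hypothetical organizations: same graph, different crown jewels.
PAYMENTS_COMPANY = {"billing-db": 10, "launch-assets": 1}
PRE_LAUNCH_STARTUP = {"billing-db": 2, "launch-assets": 8}

candidates = ["internal-api", "storage-bucket"]
print(rank(EDGES, PAYMENTS_COMPANY, candidates))    # internal API ranks first
print(rank(EDGES, PRE_LAUNCH_STARTUP, candidates))  # storage bucket ranks first
```

Nothing in the graph changed between the two calls. Only the impact table did, and nobody can derive that table from scanner output.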

The NRC understood this. Beyond the control room redesign, they mandated simulator training, licensing requirements, and crew resource management borrowed from aviation. They retrained the people, not just the instruments. Because someone still has to look at the output and make the call, and that takes people who understand what the business loses if an attack path gets exploited.

Skip either step and you're back in the TMI-2 control room. A tool without methodology is a fancier wall of flashing lights. A methodology nobody follows is a PDF on a SharePoint nobody opens.

Before you send the next report

The operators at TMI-2 were trained, competent, and trying their best. They still turned off the one system keeping the reactor alive. The information architecture made the right decision invisible and the wrong decision obvious.

Before you send the next vulnerability report, ask yourself: am I giving my platform team a decision, or am I giving them a wall of flashing lights?

The nuclear industry answered that question in 1979. It cost them a reactor.

What's it costing you?

Historical note: The Three Mile Island Unit 2 reactor was never restarted. Cleanup took 14 years and cost approximately $1 billion. The President's Commission on the Accident at Three Mile Island (the Kemeny Commission) concluded that the primary cause was not mechanical failure but "human factors": operator confusion compounded by inadequate instrumentation and training. The control room's alarm system was specifically cited as a contributing factor. Unit 1 continued operating until 2019.

This article was originally published on Medium.