Designing for compromise: a multi-tenant SaaS architecture on AWS

Multi-tenant SaaS on AWS has a gravitational pull. The defaults are well-known: a shared VPC, a central management plane with broad IAM permissions, one Cognito pool with custom attributes for tenant isolation, a single Terraform state file that describes everything. It works and it ships fast, but it creates a system where the compromise of one component can reach every customer.

This is the first article in a series about an architecture that takes a different path, and about why this particular platform demands it.

The platform is a threat modeling tool. Customers use it to map their attack surfaces, model attack paths through their infrastructure, catalog their defenses, and analyze where their security architectures are weakest. The data inside each workspace is, by definition, a detailed roadmap of that organization's vulnerabilities. Where the entry points are. Which assets are reachable from which attack paths. What's defended and what isn't.

A cross-tenant data breach on a typical SaaS platform exposes user records or business data. A cross-tenant breach on a security platform hands one organization's complete threat model to another party. The stakes are categorically different, and they demand a level of infrastructure isolation that shared-everything multi-tenancy cannot provide.

The series will go deep on every component: Terraform modules, IAM policies, container configurations, cost breakdowns. This article starts from the top: the motivation, the design philosophy, what the resulting system looks like, and how a customer goes from sign-up to a running workspace.

The problem with shared everything

Infrastructure isolation isn't absent in modern cloud architectures, but it's typically functional. AWS multi-account strategies, landing zones, and hub-and-spoke models separate workloads along functional boundaries: a security account, a logging account, a networking hub, separate environments for dev, staging, and production. This is valuable. It means a compromised application or database doesn't take down visibility: monitoring remains intact in its own account. A dev environment can't touch production resources.

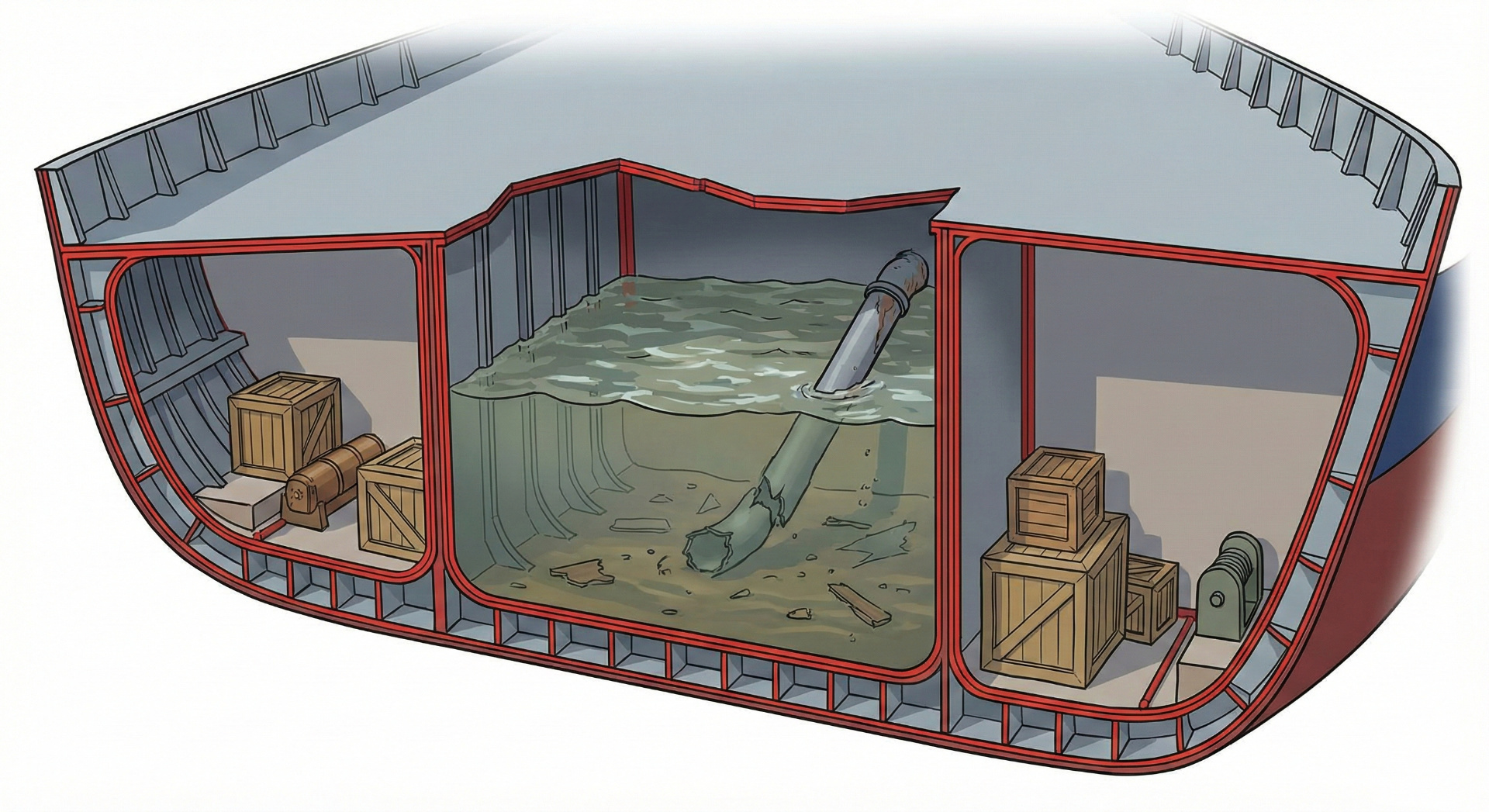

But within the production workload, all customers still share the same VPC, the same database engine, the same compute, the same management plane. The isolation between Customer A and Customer B happens in the application layer: tenant ID filters on database queries, middleware that checks ownership, row-level security policies. Infrastructure isolation per customer is rare.

For most SaaS products, application-level tenant isolation is entirely adequate. And at scale (millions of users, thousands of tenants), per-customer infrastructure isolation isn't an option. The operational overhead, the cost, and the AWS service limits themselves (VPCs per region, Cognito pools per account, subnets per VPC) make it prohibitive. Application-level isolation is the only viable model at that scale, and it serves most platforms well.

But the data on this platform isn't profile information or project files. It's security architectures, attack graphs, defense mappings, and vulnerability analyses. The cost of a cross-tenant leak isn't a privacy incident. It's handing an attacker the blueprint to an organization's defenses.

A compromised provisioner doesn't check tenant IDs before accessing an S3 bucket. A leaked IAM credential doesn't respect row-level security. When the application layer is bypassed, the infrastructure's boundaries are what remain, and in a shared-everything architecture each shared layer is a path between customers. A shared VPC means network-level reachability. A shared Cognito pool means a misconfiguration can leak tokens across tenants. A shared management plane means the most privileged component in the system can touch every customer's resources. A single Terraform state file means an operational error (an accidental terraform destroy, a corrupted state during concurrent apply) affects everyone.

The conventional response is to add more application-level checks, more middleware, more filters. For a platform that holds threat models and attack surfaces, this architecture takes a different approach: remove the shared paths entirely.

Design for compromise

The question this architecture starts from is not "How do we prevent unauthorized access between tenants?" It is "If a component is compromised, what can it reach?"

When the answer to that question could be "another organization's complete threat model," the architecture cannot afford to rely on application-level enforcement alone.

Prevention is about making the walls strong. Containment is about making the walls narrow. Prevention fails when any single layer is bypassed. Containment holds even when a layer is fully compromised, because the compromised component simply cannot reference another customer's resources. The reference doesn't exist.

The strategy is structural isolation. Each customer gets their own network segment, their own identity provider, their own storage, their own compute, their own deployer, their own databases. Not through application logic that filters by tenant, but through infrastructure boundaries that make cross-tenant access impossible. Separate subnets. Separate IAM roles that reference only one customer's resources by ARN. Separate Cognito pools. Separate Terraform state files. Separate deployers that can assume only one customer's provisioner role.

The cost is more of everything: more IAM policies, more Cognito pools, more Terraform state paths, more deployers. The benefit is that a compromise is contained, structurally, to one customer.

Everything else in the architecture follows from this premise.

What each customer gets

When a customer deploys their workspace, the platform provisions a complete, isolated environment:

Each customer gets a /28 private subnet within the customer VPC. No instance has a public IP. Inbound traffic arrives exclusively through a dedicated CloudFront distribution, which connects to the subnet over the AWS backbone via a VPC Origin. Authentication runs through a dedicated Cognito User Pool with its own password policy, MFA configuration, and token settings. A bug or misconfiguration in one pool cannot leak data to another.

Each customer gets dedicated compute. On the Consultant tier, that is a single EC2 instance. On higher tiers, it is a K3s cluster per customer with rolling updates and redundancy. The blast radius boundary is the same on every tier.

The Consultant tier has the harder engineering problem: delivering the same isolation and manageability at a price point where Kubernetes isn't justifiable. The instance must be reproducible, immutable, and updatable without SSH or manual intervention.

Fedora CoreOS solves this: it is a container-optimized Linux with a read-only root filesystem, no package manager, and no SSH. The entire OS configuration is defined in a Butane YAML file, compiled to Ignition JSON, uploaded to S3, and fetched by the instance at first boot. The containers run under systemd on a Podman bridge network (an isolated container network on the host). When the configuration changes, Terraform replaces the entire instance. The persistent EBS data volume is detached and reattached; databases survive. The root volume is destroyed and recreated from scratch. There is no configuration drift.

Two Route53 zones for the same domain create a split-horizon DNS: a public zone pointing at CloudFront and a private zone for internal service discovery. The instance can write TLS challenge records to the public zone but cannot touch routing records or the private zone. Storage is two S3 buckets (frontend assets and internal configuration) with public access blocked and encryption enabled, plus the persistent EBS data volume for databases.
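The "challenge records only" restriction can be illustrated with the Route 53 condition keys for `ChangeResourceRecordSets`. The following is a minimal sketch, not the platform's actual policy: the function name, hosted zone ID, and domain are hypothetical, and the assumption is that the challenge record lives at `_acme-challenge.<domain>`, as DNS-01 validation requires.

```python
import json


def acme_dns_policy(hosted_zone_id: str, domain: str) -> dict:
    """Build an IAM policy that allows only ACME TXT challenge records
    in a single hosted zone (hypothetical helper, for illustration)."""
    # Route 53 compares against normalized names: lowercase, no trailing dot.
    challenge_name = f"_acme-challenge.{domain}".lower().rstrip(".")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AcmeChallengeOnly",
                "Effect": "Allow",
                "Action": "route53:ChangeResourceRecordSets",
                # One zone, referenced by ARN. The private zone is simply absent.
                "Resource": f"arn:aws:route53:::hostedzone/{hosted_zone_id}",
                "Condition": {
                    "ForAllValues:StringEquals": {
                        "route53:ChangeResourceRecordSetsNormalizedRecordNames": [
                            challenge_name
                        ],
                        "route53:ChangeResourceRecordSetsRecordTypes": ["TXT"],
                        "route53:ChangeResourceRecordSetsActions": [
                            "UPSERT",
                            "DELETE",
                        ],
                    }
                },
            }
        ],
    }


if __name__ == "__main__":
    # Zone ID and domain are placeholders, not real resources.
    print(json.dumps(acme_dns_policy("Z0HYPOTHETICAL", "workspace.example.com"), indent=2))
```

With a policy of this shape, a request that touches an A or CNAME record, or any name other than the challenge name, fails the condition and is denied.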

Each customer's Fargate deployer has an IAM role that can do exactly one thing: assume this customer's provisioner role. That role references only this customer's resources by hardcoded ARN, never wildcards. Where AWS supports condition keys (record types, resource tags, source IPs), they further narrow what each role can do. The Terraform state file is also per-customer.
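As a rough sketch of what "exactly one thing" could look like, the snippet below builds a deployer policy with a single `sts:AssumeRole` statement and adds a small lint that rejects wildcard resources. The role naming scheme (`provisioner-<customer_id>`), the account ID, and both function names are assumptions made for illustration; the article does not specify them.

```python
def deployer_policy(account_id: str, customer_id: str) -> dict:
    """Policy for one customer's deployer: assume one provisioner role, nothing else.
    The role name pattern is a hypothetical convention."""
    provisioner_arn = f"arn:aws:iam::{account_id}:role/provisioner-{customer_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AssumeOwnProvisionerOnly",
                "Effect": "Allow",
                "Action": "sts:AssumeRole",
                "Resource": provisioner_arn,
            }
        ],
    }


def has_wildcard_resource(policy: dict) -> bool:
    """Return True if any statement references a resource containing '*'."""
    for statement in policy.get("Statement", []):
        resources = statement.get("Resource", [])
        if isinstance(resources, str):
            resources = [resources]
        if any("*" in resource for resource in resources):
            return True
    return False


if __name__ == "__main__":
    policy = deployer_policy("111122223333", "c0042")  # placeholder IDs
    print(policy["Statement"][0]["Resource"])
    print("wildcards present:", has_wildcard_resource(policy))
```

A check like `has_wildcard_resource` could run in CI against every generated policy, so a wildcard never reaches an account unnoticed.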

The architecture from above

The full deployment includes the expected functional isolation (separate accounts for security, logging, and monitoring), but this overview focuses on the workload architecture: the infrastructure that runs customer environments.

The workload runs in two VPCs within a single AWS region.

The management VPC hosts shared platform functions: the registration and payment Lambdas, account provisioning, the Fargate deployers that run Terraform, bastion access, and monitoring.

The customer VPC hosts customer workloads. Each customer's /28 subnet is allocated atomically via a DynamoDB counter; indices are never reused. AWS service limits cap the number of customers per account, but the architecture doesn't try to maximize that number. It scales through a cell-based model: each cell is a separate AWS account with its own pair of VPCs, its own service limits, and its own blast radius boundary. Cells isolate groups of tenants from each other at the account level. This is a second containment boundary, on top of the per-customer isolation within each cell.
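The mapping from a monotonically increasing index to a /28 can be sketched as follows. This is an illustration under stated assumptions: the VPC CIDR is a placeholder, the class name is hypothetical, and the in-memory lock stands in for the DynamoDB counter. In DynamoDB the increment would typically be a single `UpdateItem` call with an `ADD` update expression that returns the new value.

```python
import ipaddress
import threading


class SubnetAllocator:
    """Hands out consecutive /28 blocks from a VPC CIDR; indices are never reused."""

    def __init__(self, vpc_cidr: str, prefix: int = 28) -> None:
        self._vpc = ipaddress.IPv4Network(vpc_cidr)
        self._prefix = prefix
        self._block_size = 2 ** (32 - prefix)                  # 16 addresses for a /28
        self._capacity = 2 ** (prefix - self._vpc.prefixlen)   # blocks that fit in the VPC
        self._next = 0
        self._lock = threading.Lock()  # stand-in for the atomic DynamoDB counter

    def _next_index(self) -> int:
        with self._lock:
            index = self._next
            self._next += 1  # never decremented, so a released index is not handed out again
            return index

    def allocate(self) -> tuple[int, ipaddress.IPv4Network]:
        index = self._next_index()
        if index >= self._capacity:
            raise RuntimeError("VPC address space exhausted; provision a new cell")
        base = int(self._vpc.network_address) + index * self._block_size
        return index, ipaddress.IPv4Network((base, self._prefix))


if __name__ == "__main__":
    allocator = SubnetAllocator("10.1.0.0/16")  # placeholder CIDR
    for _ in range(3):
        index, subnet = allocator.allocate()
        print(index, subnet)
```

AWS reserves five addresses in every subnet, so a /28 leaves 11 usable addresses per customer.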

VPC peering connects the two networks within a cell, with DNS resolution enabled in both directions. The split means a compromised customer instance has no network path to the management plane, and a compromised deployer has no persistent presence in the customer VPC.

From sign-up to running workspace

The shared platform infrastructure (VPCs, peering, NAT, container registries, shared IAM roles) is provisioned once by the platform operator. What's specific to this architecture is what happens when a customer arrives. The provisioning pipeline is a sequence of Lambda functions, each running as a single compiled binary inside a minimal FROM scratch container: no runtime, no shell, no dependencies beyond the binary itself. Each invocation starts clean, runs one function, and exits. The pipeline has four stages, with gates between them.

Registration is itself a queue-based, multi-step flow. The sign-up form creates a DynamoDB record and sends a verification email. Nothing else. Email verification pushes a job to SQS, which triggers a provisioner Lambda that creates the Cognito pool. Each step is isolated: the registration Lambda can write to DynamoDB and send email but cannot create Cognito pools; the provisioner Lambda can create Cognito pools but is triggered only through SQS, not directly. Failed provisioning retries through the queue, with a dead letter queue and CloudWatch alarms as a backstop. A bot submitting 10,000 fake sign-ups creates 10,000 DynamoDB records (negligible cost) and they auto-delete within two hours. No real AWS resources are created until a human verifies their email.
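A pending sign-up record with a two-hour lifetime might look like the sketch below. The field names, the status value, and the decision to store only a hash of the verification token are assumptions for illustration; the article does not describe the record layout or the expiry mechanism. If DynamoDB TTL is the mechanism, the expiry attribute has to be a Number holding epoch seconds, and because TTL deletion runs asynchronously, readers should still check the expiry themselves.

```python
import hashlib
import secrets
import time
from typing import Optional

PENDING_TTL_SECONDS = 2 * 60 * 60  # the two-hour window mentioned above


def new_pending_signup(email: str, now: Optional[int] = None) -> tuple[dict, str]:
    """Return (record, token). Only the token's hash goes into the record;
    the raw token goes into the verification email."""
    now = int(time.time()) if now is None else now
    token = secrets.token_urlsafe(32)
    record = {
        "email": email.strip().lower(),
        "status": "PENDING_VERIFICATION",  # hypothetical status value
        "token_sha256": hashlib.sha256(token.encode()).hexdigest(),
        "created_at": now,
        "expires_at": now + PENDING_TTL_SECONDS,  # epoch seconds
    }
    return record, token


def is_live(record: dict, now: Optional[int] = None) -> bool:
    """Treat a record as gone once it has expired, even if it has not been deleted yet."""
    now = int(time.time()) if now is None else now
    return now < record["expires_at"]


if __name__ == "__main__":
    record, token = new_pending_signup("user@example.com")
    print(record["status"], is_live(record))
```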

Payment gates the transition from a database record to real resources. The payment flow is itself isolated: Stripe webhook → API Gateway → Lambda (signature verification) → SQS → processing Lambda → DynamoDB. Each step authenticates independently: the webhook Lambda verifies the Stripe signature, and the processing Lambda validates the SQS message and cross-references the session. The webhook Lambda can only push to SQS; it has no direct access to DynamoDB or any infrastructure creation capability. Until payment is confirmed, the customer exists only in DynamoDB.
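The signature check in the webhook Lambda can be sketched with the standard library alone. Stripe signs the string `<timestamp>.<raw body>` with HMAC-SHA256 using the endpoint secret and sends the result in the `Stripe-Signature` header as `t=...,v1=...`. The function below is an illustrative reimplementation of that scheme, not the platform's code: the function name and the five-minute tolerance are assumptions, and the official Stripe SDKs provide the same check.

```python
import hashlib
import hmac
import time
from typing import Optional


def verify_stripe_signature(
    payload: bytes,
    header: str,
    secret: str,
    tolerance: int = 300,
    now: Optional[int] = None,
) -> bool:
    """Verify a Stripe-Signature header against the raw request body."""
    now = int(time.time()) if now is None else now
    timestamp = None
    candidates = []
    for part in header.split(","):
        key, _, value = part.strip().partition("=")
        if key == "t":
            timestamp = value
        elif key == "v1":
            candidates.append(value)
    if timestamp is None or not timestamp.isdigit() or not candidates:
        return False
    # Reject stale or future-dated events to limit replay.
    if abs(now - int(timestamp)) > tolerance:
        return False
    signed_payload = timestamp.encode() + b"." + payload
    expected = hmac.new(secret.encode(), signed_payload, hashlib.sha256).hexdigest()
    # Constant-time comparison against every v1 signature in the header.
    return any(
        hmac.compare_digest(expected.encode(), candidate.encode())
        for candidate in candidates
    )
```

The check must run on the raw request bytes. Parsing and re-serializing the JSON body before verification changes the bytes and makes valid signatures fail.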

Account provisioning sits behind a triple gate: three independent factors must pass before any infrastructure is created. These are a valid JWT (proving identity through Cognito authentication), an active payment status in DynamoDB (proving Stripe confirmation), and a valid provisioning token from a magic link email (proving email access; the token is time-bound and hashed). All three must pass independently. Only then does the platform create the customer's Cognito pool, /28 subnet, split-horizon DNS zones, per-customer IAM roles with hardcoded ARNs, and an internal S3 bucket.
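One way to express the gate is to evaluate every factor and report each failure separately, so that one passing factor cannot mask another. The sketch below makes several assumptions: JWT verification happens elsewhere and arrives as a boolean, the payment status value is the string `"active"`, and the provisioning token is stored as a SHA-256 hash with an expiry timestamp. All names are hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class GateInputs:
    jwt_valid: bool            # outcome of a real JWT verification done upstream
    payment_status: str        # value read from the customer's DynamoDB record
    presented_token: str       # token taken from the magic link
    stored_token_sha256: str   # hash stored when the magic link was issued
    token_expires_at: int      # epoch seconds
    now: int                   # epoch seconds


def failed_gates(inputs: GateInputs) -> list[str]:
    """Evaluate all three factors and return the names of those that failed."""
    failures = []
    if not inputs.jwt_valid:
        failures.append("identity")
    if inputs.payment_status != "active":
        failures.append("payment")
    presented_hash = hashlib.sha256(inputs.presented_token.encode()).hexdigest()
    token_matches = hmac.compare_digest(
        presented_hash.encode(), inputs.stored_token_sha256.encode()
    )
    if not (token_matches and inputs.now < inputs.token_expires_at):
        failures.append("email_token")
    return failures


def may_provision(inputs: GateInputs) -> bool:
    """Provisioning proceeds only when no gate has failed."""
    return not failed_gates(inputs)
```

Returning the list of failed gates, instead of stopping at the first failure, also gives monitoring a clear signal about which factor is being probed.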

Infrastructure deployment is triggered by the customer. They click "Deploy Infrastructure" in the management console. This launches a Fargate task whose IAM role can do exactly one thing: assume this customer's provisioner role. The provisioner role references only this customer's resources by ARN. It cannot discover or touch another customer's infrastructure. Terraform runs against a per-customer state file, so even a corrupted state or an accidental destroy is contained to one customer. The deployer creates the EC2 instance, data volume, CloudFront distribution, frontend bucket, TLS certificate, DNS records, and management API. The instance boots from a clean Ignition configuration fetched from S3 and stands up the full container stack.

Each stage is idempotent: re-running it produces the same result.

How traffic reaches the backend

A user request to their workspace hits CloudFront first. Static assets go to S3 through Origin Access Control. API and GraphQL requests go to the VPC Origin. CloudFront connects to an ENI in the customer's subnet over the AWS backbone, injecting a secret header that proves the request came through the CDN.

The EC2 instance has no public IP, but traffic between CloudFront and the backend is still encrypted end-to-end. The instance runs a Let's Encrypt certificate obtained through DNS-01 challenge validation. No inbound port 80, no public endpoint. nginx terminates TLS, validates the secret header (rejecting anything that didn't arrive through CloudFront), and reverse-proxies to the backend. The backend connects to the graph database, vector store, and policy engine over a Podman bridge network. Nothing is exposed to the host except nginx on port 443.
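In the architecture described here the header check lives in nginx. The sketch below expresses the same rule in Python purely to make the logic explicit; the header name `x-origin-verify` and the function name are hypothetical.

```python
import hmac

ORIGIN_HEADER = "x-origin-verify"  # hypothetical header name


def came_through_cdn(headers: dict, expected_secret: str) -> bool:
    """Accept a request only if it carries the shared secret that the CDN injects."""
    if not expected_secret:
        # Fail closed: a missing configuration must never match a missing header.
        return False
    normalized = {name.lower(): value for name, value in headers.items()}
    presented = normalized.get(ORIGIN_HEADER, "")
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(presented.encode(), expected_secret.encode())
```

The header complements the network controls and does not replace them: the instance still has no public IP, so the secret only has to distinguish CloudFront from other traffic that can already reach the subnet.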

Admin operations take a separate path through API Gateway and Lambda. Every step in the chain independently verifies the user's JWT and authenticates the upstream component that forwarded the request. No layer trusts that a previous one already validated.

Deliberate trade-offs and tiering

This architecture optimizes for blast radius isolation. That has consequences, and the trade-offs differ by tier.

The Consultant tier runs a single EC2 instance per customer, Podman with systemd, and instance replacement for updates. It's designed for independent consultants and small teams running threat modeling sessions, where brief downtime during updates is acceptable and single-node simplicity keeps cost and operational complexity low.

The Team and Enterprise tiers move from a single node to a self-managed Kubernetes cluster (K3s) per customer. The isolation model stays the same: per-customer subnet, per-customer IAM roles, per-customer state. What changes is the compute layer inside that boundary: one instance with Podman becomes a multi-node cluster with rolling updates and no downtime. The Terraform modules are different, but the blast radius boundary is identical. The series will cover these tiers separately.

Within the Consultant tier, the trade-offs are explicit:

No high availability at the instance level. The tier runs a single EC2 instance. If it fails, the instance is replaced and the data volume survives, but there are 2 to 5 minutes of downtime. For threat modeling sessions, as opposed to real-time transactions, this is acceptable.

No Kubernetes. Multiple containers on one node, managed by Podman and systemd. Kubernetes would add control plane and networking complexity without corresponding benefit at single-node scale.

No shared database. Each customer runs their own graph database and vector store as containers. More expensive than a shared managed cluster, but customer data never shares a database engine with another customer. Blast radius isolation extends to the data layer.

No in-place OS updates. Updates have two speeds. Container image updates are fast: pull the new image, restart the service. OS-level changes (kernel, systemd units, nginx config) replace the entire instance, which means 2 to 5 minutes of downtime. Either way, there is no configuration drift. Every update starts from a known, clean state.

Per-customer isolation is inherently more expensive than shared infrastructure. The architecture manages this through deliberate choices across the platform: an open-source NAT instance instead of the AWS NAT Gateway, CloudFront edge locations limited to the target market, and an S3 Gateway Endpoint to avoid data transfer costs.

What comes next

This article described the shape of the architecture: the motivation, the isolation model, and how the pieces fit together. The rest of the series goes inside each component: IAM policies, Terraform modules, queue-based provisioning, split-horizon DNS, the immutable instance lifecycle, and cost. Each article will include working code, configuration, and the reasoning behind the trade-offs.

Series

- Architecture overview (this article)

- Customer isolation from the infrastructure up

- Automating isolation: the self-service deployment pipeline

- CloudFront VPC Origins: what breaks and how to fix it

- The compute stack: Fedora CoreOS, Podman quadlets, and systemd-native container orchestration

This architecture is implemented in dether.net, a graph-native threat modeling platform. If you're interested in seeing these patterns applied to security architecture analysis, that's where they run in production.